Understanding Language

Language is often viewed as the pinnacle of (human) intelligence. The seamlessness by which machines can be integrated with society depends on their understanding and mastery of language. Our research thus covers domain-specific large-scale language modeling, text manipulation algorithms, argumentation, and causal language. It is studied specifically in the context of conversational AI and connecting knowledge extraction and graphs with goal-driven dialogs, as well as in the context of mining the scientific literature.

Research Focus

Our research in natural language processing and information retrieval focuses on algorithms and models. The overarching challenge is advancing language understanding and manipulation.

Key tasks

- modeling (language representation in a computer),

- paraphrasing (conveying the message of a given text using different words),

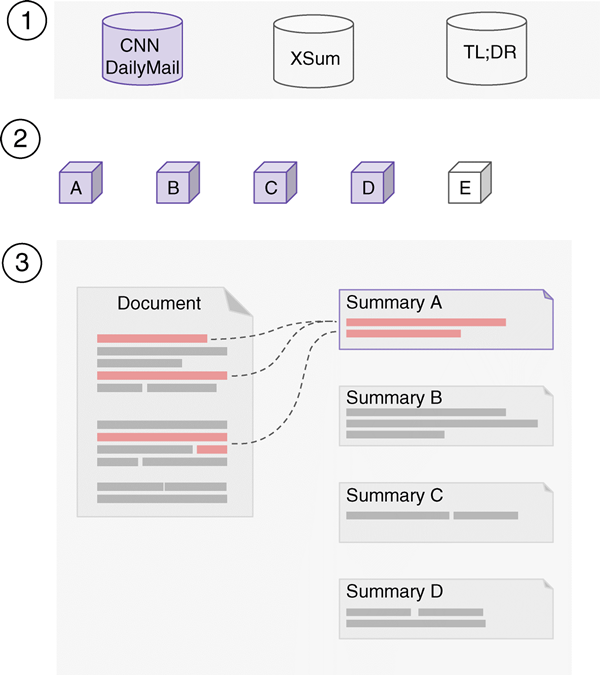

- summarization (conveying the key message of a given text with fewer words),

- argumentation (persuading readers of a claim or a conclusion),

- conversation,

- and causal knowledge.

Artificial Intelligence technologies with the help of increasingly large language resources from web archives fuel the generalization capabilities of these models.

Aims

In the research area “Understanding Language”, we are building domain-specific language models for writing assistance and problem-solving, focusing on latent variables in language models.

- Paraphrasing at paragraph level; we expect to gain insights from summarizing long texts.

- Summarization of research in new domains, such as social media.

- Constrained paraphrasing and summarization; constraints include language simplicity, writing style, and domain-specific requirements.

- Integrating computational argumentation and conversational technologies.

- Causal knowledge acquisition from text for advanced AI reasoning.

- Bias analytics in all of the above; focus on minority protection.